Following on from my last post looking at AI and assessment (see here), where I focussed very much on the high-stakes world of terminal exams and coursework, I would now like to look towards formative assessment and the learning process. As with my last post, this post aims to share some of the points I made at a recent conference where I spoke on AI and Assessment, presenting some questions which I believe we increasingly need to consider in a world of AI and generative AI solutions.

AI Supported Learning

Learning platforms and computer-based learning have existed for some time, and they haven’t looked, and don’t look, like the image here. I remember having to do some Maths learning during my teaching degree using a computer-based learning platform, and that was in the mid-to-late 90s. At the time I wasn’t that fond of these learning platforms, and this feeling stayed with me. My issue was that the platforms, although offering differing routes through the broad content, were largely linear in their offering in relation to each topic or even the smaller units of learning. This couldn’t compare to a teacher delivering content, who could see students struggling and then instantly adjust the learning content accordingly.

We have come a long way from there, with AI and generative AI now able to provide us with far superior learning platforms. My sense is that these platforms tend to break into two types: one where the AI analyses usage and interaction data to direct learning content creators, and one, the more recent and emerging type, where generative AI provides an AI-based support, teaching or coaching agent.

In the model where the platform analyses usage and interaction data, the key benefit is that this data is gathered from all users. The platform can look for common patterns or anomalies across factors such as gender, language, nationality and a variety of others, to find which learning content works and which does not. This allows the creation of effective learning content based on a huge amount of data across many schools and many learners, far beyond the data that a teacher may have at hand. As such, the content in these platforms progressively improves over time, based on data rather than on the intuition or other less tangible factors a teacher may rely on, which may be wrong.

Where generative AI is used, students get a chatbot which prompts and supports them as they work through the learning content, with the AI trying to mirror the supportive and coaching role of a teacher, but individualised for each student and available any time, anywhere, assuming access to a device and an internet connection. I feel it is here that there is the greatest potential, especially in relation to more fundamental skills and knowledge development, freeing up teachers to focus on more advanced concepts and also on wider issues such as resilience, leadership, interpersonal skills and wellbeing. I recently read a post about a school which uses AI and doesn’t have “teachers”, instead having “guides”. I suspect this sounds more radical than it is in practice, especially the reported comment by the co-founder that “we don’t have teachers”. My view is that AI learning platforms won’t replace teachers; however, with AI learning platforms working alongside teachers, we may be able to achieve more, and more quickly, with our students. I suspect the school is more akin to this partnership than the report would suggest, but I have no first-hand experience of the school so cannot be sure.

Challenges

AI as a tool to assist, and maybe guide and deliver, learning offers a number of benefits; however, I think it is important to acknowledge some of the challenges and risks. We may not have a solution at this point, but at the very least we need to be aware.

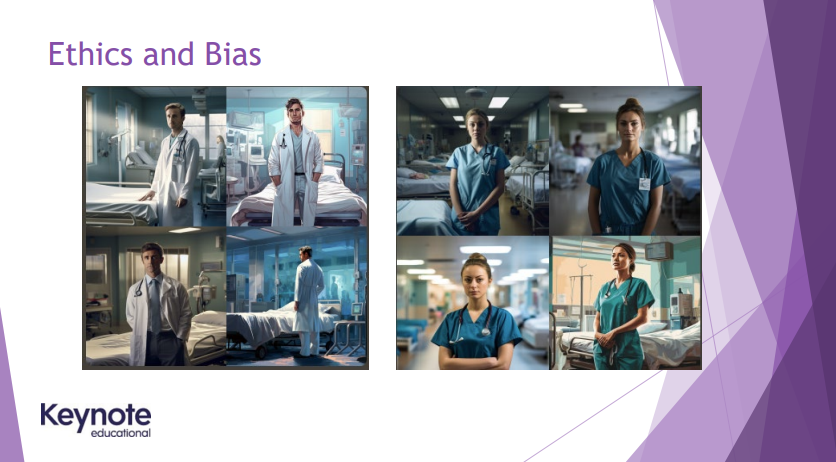

Bias is a clear challenge and something which has been widely reported in relation to AI. In my session I asked a generative AI solution for a picture of a nurse and a picture of a doctor, with the solution returning images where the doctors were all male and the nurses all female, and where all the images were of white people. This experiment clearly shows bias; however, the challenge in AI-powered learning platforms is that the bias may not be so easily visible. What if the platform decides, based on statistics, that students from a particular area, nation, gender, preference, age or other characteristic generally do worse than average? The platform may then present them with content it believes to be appropriate to this ability level, in doing so impacting their ability to achieve and the challenge they receive, and possibly causing a self-fulfilling prophecy. And when a parent asks about a student’s learning path, is it ethical to use learning platforms if doing so means we may not be able to explain the decisions taken in the child’s learning experience and journey, where these decisions were taken by AI?

Data is another challenge we need to consider here, given the potentially huge and growing wealth of data learning platforms might gather in relation to students. This isn’t just the data a school might provide, such as name, email and age, but the data produced through each and every interaction with the platform, plus the data gathered as diagnostic data, such as the device being used, IP address, etc. And then there is the data a platform might be able to infer from the data gathered: could an IP address, which suggests a rough geographic location, a device type and internet speed allow you to infer the wealth of a user or user’s family? I suspect it could. Now consider the massive amount of data gathered over time, across different curriculum subjects and each use of the platform; the potential for inference grows with each additional data point. How do we manage the risks here in relation to data protection, cyber risk and also accidental or purposeful misuse of the data? If we are to use AI-assisted learning solutions, I think we need to ensure we have considered how we might do this safely.

Conclusion

Education has had its challenges for some time, including teacher recruitment, teacher workload and wellbeing, and equity of access to education. Maybe AI can help with some of this, and maybe AI risks making things worse in some areas; it is difficult to tell. The one thing we can tell is that AI is here, and here to stay, so I think we need to make the most of it and shape its use to be as positive and powerful as it potentially can be. A difficulty here, however, is the slow pace with which education changes (little has changed in almost 100 years!). The pandemic did cause some change in my view, but some of that has rubber-banded back to pre-Covid setups. The question now is: is AI the next catalyst for education change, will it impact education as much as or more than the pandemic, and will its impact persist beyond the initial “shiny new thing” period? Only time will tell, although my sense is there is potential for the answer to all three questions to be yes.

References:

A Texas private school is using AI technology to teach core subjects; A. Garcia (Oct, 2023), CHRON, Texas private school replaces teachers with AI technology (chron.com)