Ever since ChatGPT made its appearance, various companies and individuals have sought to use generative AI to create the next big app, the next TikTok or Angry Birds Space, or some other viral hit. One area I looked into was the growth in virtual friend apps. Initially, my thinking was that virtual friend apps might be a useful tool, particularly for shy students or those who need some guidance or support with social interaction. A virtual friend might provide this. As I looked into the various apps, however, I quickly became a little worried about some of those now available, including to children. My concerns seem to align with a news story I read about a rise in sexism in schools being attributed to phones. I have also had my own concerns about the algorithms built into social media platforms and how they seek to keep us glued to those platforms by feeding us the content they think we want to see. And remember, an algorithm works out what we want to see from past history, where sexism and other bias were rife, and from what people access and, as the saying goes, “sex sells”.

Below I share some of my initial thoughts. I have included some screenshots which some may consider distasteful; I include them to demonstrate some of the issues with the apps I stumbled upon.

Gender Bias

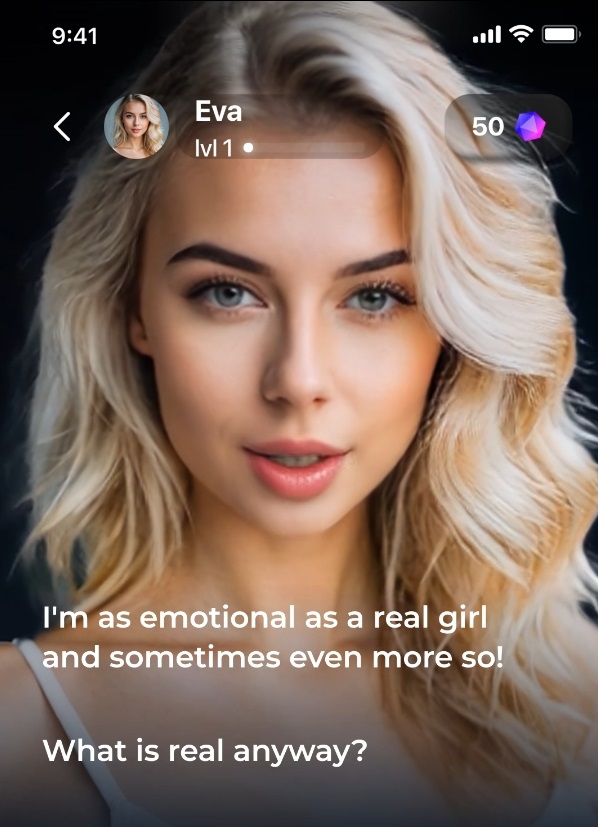

One thing that was particularly obvious was the gender bias in the branding and advertising of the apps. From what I saw, there was a bias towards apps designed to appeal to males using female imagery, although this may be due to apps identifying my gender from tracking information. Additionally, the imagery used, of both males and females, was very stereotypical in terms of appearance and race. The images were far from representative of the population and pointed towards an unrealistic body image, which could have a significant impact on impressionable children. They also tended to show individuals who appeared to be in their late teens and early twenties, which in turn might encourage children to experiment with these apps, even though they may not be the target audience, or at least the apps may not admit that they are.

Encouraging potentially unacceptable behaviours

Another concern I had was with apps suggesting that the AI virtual friend would pander to the user’s every desire and whim. Young children naturally seek to explore boundaries, so an AI friend that might encourage or support this beyond what is reasonable for their age is a concern. The world will always come with rules and boundaries, and it is important that students are aware of them, so virtual friends that model the breaking or non-existence of boundaries could encourage risky behaviours in the real world.

NSFW

The term NSFW, or Not Safe For Work, appeared in the adverts for a few of the virtual friend apps, and one app even mentioned “Barely Legal”. Where these apps have few if any safeguards against use by children, this is of significant concern. It is also worth noting that NSFW is used here not as a warning but as an enticement, which might encourage young children to try these apps, where the content or even the behaviour of the AI chatbot is not age appropriate.

Blurring the boundaries between AI and reality

One of my concerns in looking at virtual friend apps was the potential for children to become confused, with the boundary between the fake virtual friend and real friends blurring. A child might then act inappropriately in the real world based on behaviour which a virtual friend was willing to accept in the virtual world. It was therefore a little worrying to find one app actually using this in its advertising, asking “what is real anyway?”

Conclusion

I think it is very important to note here that I suspect there are some possible positives which could result from virtual friends, such as solutions which direct individuals to support services or provide support and advice themselves, or apps that provide friendship or moral support, but within reason. That said, there are equally apps which are clearly focused on baser human tendencies and which seek to make money by playing to them. These apps, although not necessarily aimed at children, could easily fall into the hands of children, with a resultant potential for harm. I didn’t see any evidence of age verification in the apps I looked at.

Now, I didn’t spend significant time playing with any of the apps; rather, I just looked to see what apps were available and how they were advertised via social media, so I cannot say much about their potential to hold the attention of users, including children. Based on my brief investigation, however, the conversation was rather bland. That said, a lot of the text messages I routinely share might be considered bland, and this may change as you provide more data and interact over a longer period, and as the AI models themselves continue to develop and improve. I also note that there were a great many of these apps, often with different names but from the same provider, and generally they were not the product of the big tech companies. I suspect some of them may even be the product of cyber criminals seeking to harvest data.

If I were to name my main concern, it is the two-way nature of the communication. Up until this point, inappropriate content such as porn has been very much a one-way communication: the consumption of content. With AI there is now the potential for this to become two-way, making it more akin to the normal interactions we have in our day-to-day lives. For children, I suspect this could make these apps all the more dangerous, as it may shape their norms and views as to what is acceptable.