Following my DigiFest session, I thought I would share some of the thoughts that went into it.

It is important first to acknowledge that our views on technology are very much the result of our experiences. My experiences include learning to code in BASIC on the Commodore 64 at an early age, before moving on to AMOS BASIC on the Amiga and then QBasic, Visual Basic and C++ on the PC. This early use of technology, and the ability to develop software to solve problems, has very much shaped my views. Today I walk around with a mobile phone that has over a million times more memory than my Commodore 64 from less than 30 years earlier, and growth across that period has not been linear. A perfect illustration of this lies in how long it took various technologies to reach 50 million users. Radio took 75 years, whereas TV took only 38 years. Closer to today, Facebook cut the time to 50 million users to 3.5 years, before Pokémon Go managed it in less than a single month. It is clear from this that the pace of change is quickening.

Looking at our use of technology today, we find that most of us now use it for communication or entertainment, in the form of mobile phones, social media and on-demand TV. We are also increasingly required to use technology to access government and council services, banks, etc. Technology is now integral to our lives and here to stay, complete with the ever-quickening pace of change mentioned earlier.

The more I think about the pace of change and the way technology is becoming an integral part of our everyday lives, the more the movie Ready Player One comes to mind. In the movie, Wade Watts uses virtual reality to live a double life as Percival. As the film progresses, it becomes clear that his two lives aren’t as separate as he would like, and that events in virtual reality affect real life and vice versa. For us, as for Wade Watts, our real lives are inseparably linked to our digital lives. In fact, I believe it no longer serves us to think of digital citizenship, as the term implies that there is an alternative, a non-digital citizenship, when in fact there is not. Perhaps the discussion should not be about digital citizenship at all, but simply about citizenship. As Danah Boyd said in her book It’s Complicated, although the apps might change, our online connectedness, our need to share and the challenges around privacy are “here to stay”.

This new technology brings benefits, or potential benefits, and we need to acknowledge them. Examples include the current exploration of self-driving vehicles and the recent use of choreographed drones as an alternative to traditional New Year’s Day fireworks. In relation to current events around the globe, there is also the use of Artificial Intelligence (AI) to identify new antibiotics and other drugs. We need to prepare to make the best of these new opportunities and to ensure that the students in our educational establishments are prepared.

But alongside these benefits there are also risks. Fake news, and the ease with which videos, including interviews, can be faked, will make it increasingly difficult to tell fact from fiction. We also face challenges to individual privacy, risks around habits and potentially addictive behaviour, and the potential for platforms to go so far as to shape and influence human behaviour.

The danger with these benefits and risks is the binary views they commonly produce, of technology as either infinitely good or inherently bad and evil. Sadly, these views are seldom of much use: to view technology as purely good is naïve, whereas to consider it purely negative is equally naïve and simplistic. The reality is that technology, and more particularly the use of technology for a given purpose, lies somewhere between the extremes of good and evil, positive and negative. Any use of technology is likely to have its positives but also its drawbacks or unintended consequences, and therefore we need to consider the pros and cons carefully and seek a balance.

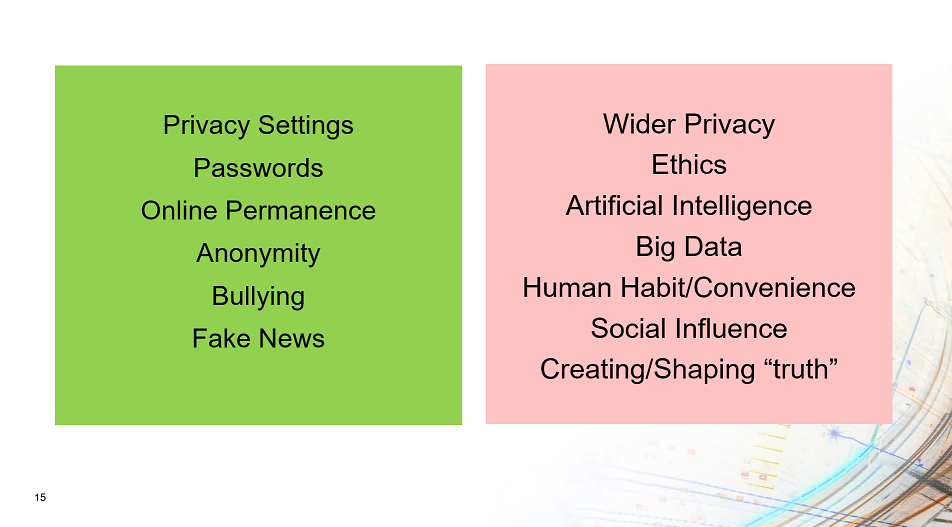

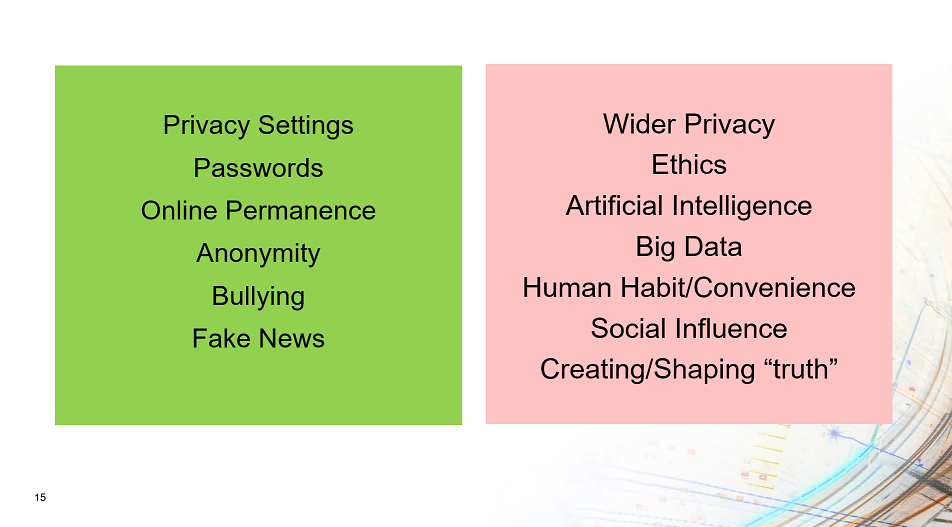

Looking at how we prepare our students for the world and the issues listed above, I can see the things we do satisfactorily through our eSafety programmes; however, I can also see areas where little or nothing is currently offered. We currently discuss the importance of privacy settings on social media, of having strong passwords, of how online content, once posted, remains permanent, and of the need to be aware of online bullying. These areas are covered. Sadly, however, little is said about the conflict between user convenience and individual privacy, between individual privacy and the public good, and between social media reporting on, or actually creating, the news and the truths we come to believe. These are the areas we need to discuss: there isn’t a single answer, and therefore the most we can do is help students develop their own views through discussion. It is through discussion that we can hopefully ensure that students, when presented with the countless challenges of technology use, approach them with their eyes wide open.

This brings me nicely to a couple of examples, from the many available, which would make valuable discussion topics in our schools.

Algorithms and AIs can be manipulated by an individual or organisation to their own ends.

Do we understand why algorithms might exist? Do we understand why an individual or organisation might seek to “game” an algorithm, and the potential gains that might arise? One simple example of what is possible is the use of a number of mobile phones to fool Google’s traffic analysis algorithm into identifying a traffic jam where none exists, resulting in it redirecting traffic away from a given street.

Governments can filter and censor content based on political motivations.

Do governments need to be able to filter content for public safety? But could their filtering be used to shape public perception, or to revise facts for their own political ends and gain? What is truth, and should governments be allowed to control and revise it? We have already seen governments filtering internet content, with that filtering then being identified as lacking transparency and serving their own interests; the filtering of TikTok is one possible example of this.

Online companies can gather and sell your data for profit.

Do companies need to gather all the data they gather? Do they have the right to sell this data? Where data is anonymised, is it possible for data sets to be combined in a way that reverses the anonymisation? One simple example is a cellular carrier selling on data about its customers’ viewing habits.

In her book The Cyber Effect, Mary Aiken identifies the need for us to “make sense of what’s happening”, and only through discussion is this likely to occur. One concern I have, however, is where these discussions might happen. In the current crowded curriculum they tend to be banished to the IT classroom, a subject that not all students study. I don’t think this is sufficient. These discussions need to take place throughout schools, across subject areas and stages, with students, staff, parents and the local community. Discussing the challenges of technology needs to become part of the culture, simply the way we do things around here.

As Danah Boyd stated, “Collaboratively, adults and youth can help create a networked world that we all want to live in”. If I were to ask anything following my session at DigiFest, it would be this: let’s begin a discussion in our schools, colleges and universities, any citizenship-related discussion where technology has its part to play, complete with its pros and cons, but let’s do it today.

You can access my full presentation from DigiFest 2020 here.

Final Note: As we now engage in much more home and distance learning due to the coronavirus, it may be more important than ever for these discussions to happen, and to happen now!

The recent issue of Huawei equipment in the UK’s 5G infrastructure highlights a challenge of the internet and technology: they often cross international borders, but the services and hardware are produced by companies that sit clearly within the borders of particular countries, and therefore potentially within the influence of their governments. There is a clear tension here between the services provided to the internet and the companies providing them.

Before the Covid-19 crisis began, I presented on Digital Citizenship at the JISC DigiFest event and, before that, at the ISC Digital event in Brighton. In both cases, one of my reasons for presenting was my concern that students’ increasing use of technology was not being matched by appropriate consideration or awareness of the risks. I was worried that students were giving away large amounts of data without considering who they were giving it to, how it might be used, how long it would be kept, or how it might affect them, or be used to influence them, as individuals and as groups in the future. I was worried, and believed that education and its educators needed to start doing something about this.

I thought I would share some initial thoughts following day one of JISC DigiFest. The event was launched with a very polished and professional pre-prepared video displayed on screens scattered around the event’s main hall, focussing on the rate of technological change and some of its implications for the world we live in. The launch session also included a room-height “virtual” event guide introducing the sessions and pointing you in the direction of the appropriate hall. As conference launches go, this was the most polished and inspiring I have seen, although on reflection there wasn’t anything particularly innovative or technically complex about it.

The keynote speaker addressed the changing viewpoints of different generations, focussing particularly on Generation Z, the generation currently in our sixth forms, colleges and universities. I took away two key points from the presentation. The first was how each generation’s views are shaped by its experiences, particularly between the ages of 12 and 20. Jonah Stillman used attitudes to space as an example, showing how Generation X might have positive views focussing on the success of the moon landing, whereas Millennials may have a more cynical view following the Challenger disaster. Jonah also mentioned movies as a social influence, and how those in the Harry Potter generation may view cooperation and trying hard, even when unsuccessful, in a positive light. Those born later may draw on another film series, The Hunger Games, resulting in a greater tendency towards competition and the need to succeed, in line with its storyline of everyone for themselves, where failure results in death. The second takeaway came from the questioning at the end of the session around what some saw as the absoluteness of the boundaries between generations. I think Jonah’s use of the word “tendency” addressed this concern: the purpose of the labels was simplicity, indicating a general trend rather than suggesting that everyone born within certain dates exhibits a certain trait. It increasingly concerns me that this argument keeps coming up, when surely it is clear that we need simplified models to make explanations clear, and that no model, no matter how complex, will ever truly capture the real complexity of the world we live in.

I had the opportunity to present at the ISC Digital EdTech summit in Brighton during the week. My talk, “Common Sense Safeguarding”, focussed on the need for schools to take a broader, more risk-based view of online safety, as opposed to the previous, more compliance-driven approach. Given the number and range of technologies students have access to, the tools available to bypass protective measures put in place by a school, and even the ability to negate those measures entirely by using 4G, online safety is no longer as simple as it once was. It therefore demands a broader view.
