We now live in a world where, if there is a car accident, a fight or something similar, everyone reaches for their phone to film it. No-one, or very few, rush in to help and support. Instead the majority whip out their mobile phone and video the event before publishing it online for the world to see, in the hope of going viral.

A positive spin

This can be helpful in getting news out quickly, and the footage can be useful as evidence of what actually happened, hopefully removing subjective memories from the equation, although as I will mention later things are not quite that simple. I remember watching a movie which centred upon the use of video footage, and a bloke with a handy-cam, to unpick the events leading to a terrorist attack. We now live in a world where pretty much everyone has a camera with them, in their mobile phone, and therefore the chances of doing something criminal and not being recorded are slim, albeit that has just led to a growth in face coverings and hoodies to obscure the identity of those seeking to do ill. But maybe the common access to phone cameras might discourage some from committing crime, in which case that can be seen as another positive.

But privacy, I hear you say

What privacy do we have when we might get caught on the camera of someone we don’t know, and when they might then publish this online for all to see, all without either our knowledge or our permission? In a world of social media where we publish our own content this happens all the time, and we may find ourselves laughing at the person who falls over, but how do they feel with their mistakes captured for eternity online for the world to watch and laugh at? Also, what about the videos where only an excerpt is shared online, such that the content shared does not convey the context of the event and is instead purposefully picked to suit a particular narrative?

At the edges

There is also the issue at the extreme edges of this balance, where individuals post online their arguments with security staff or police about their right to film in public, or about their right to privacy and to not be filmed when involved in a march or demonstration. To the person stating their right to film in public, I wonder what their aim is in filming where security or police feel the need to challenge them. And to someone stating their right to privacy, if they are not doing anything wrong and the footage is only for the purpose of policing and identifying those corrupting free speech, etc., then again, what is their concern? Now I know, again, things are not that simple.

Balance and pragmatism

I often cite balance and will do so here: having mobile phones, and the ease of filming and photographing events that comes with them, presents a benefit but also presents a risk. The technology is a tool, and some will seek to use it constructively whereas others will seek to use it for their own negative ends. I am not sure what the answer is to this, although my personal feeling is we need to be a bit more pragmatic in terms of what is acceptable and unacceptable, and maybe it is our national culture, rather than the law, which should lead the way in deciding this.

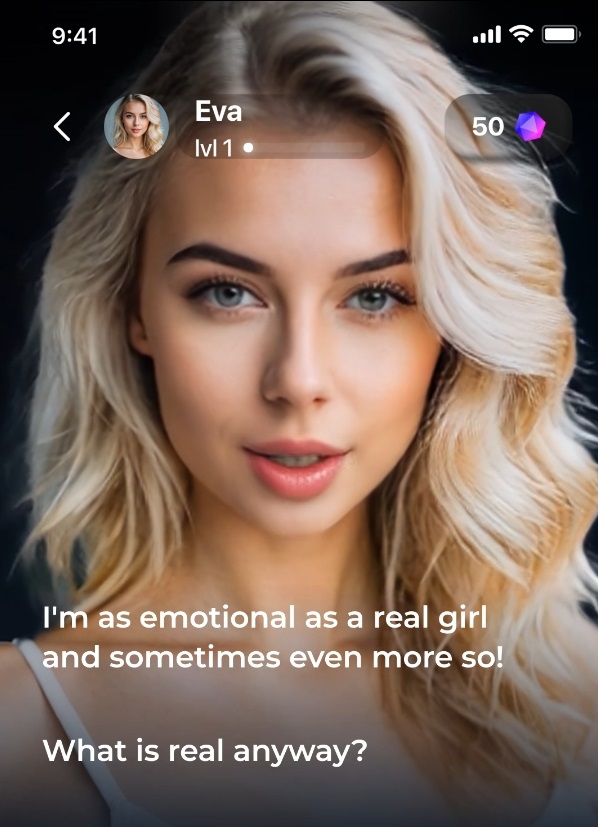

I think the key issue is that video capture isn’t going away. In fact it is getting better, with higher resolution, and footage is getting easier to edit with AI tools, so the challenges are only likely to grow. The editing or creation of fake, or synthetic, imagery or footage is a clear and growing concern. It is for this reason that I think this is something we need to talk to students about as part of discussing digital citizenship. What do they think is acceptable or unacceptable, and why? And how do we build a world where we, in the vast majority, stay on the acceptable side of the fence?