Given the concerns about teacher workload (a quick look at the Teacher Wellbeing Index reports is enough to see them), it is clear that we need to find solutions to the workload challenge. Artificial intelligence (AI) is one potential piece of this puzzle, although it is by no means a silver bullet. On a number of occasions I have come across concerns about the challenges of AI, such as inaccuracies. I avoid talking of hallucinations as it anthropomorphises AI; the reality is that its probability algorithm just output something which was wrong, so why can't we simply say AI gets it wrong occasionally? And we are right to have concerns about where an AI solution might provide inaccurate information, especially where it might relate to the marks given to student work or the feedback provided to parents in relation to a student's progress. But maybe we need to stop for a moment, step back and look at what we do currently. Are our current human-based approaches devoid of errors?

I did a quick search on Google Scholar and found a piece of AQA research from 2005 looking at marking reliability. The below is the first line in the conclusion section of the report:

“The literature reviewed has made clear the inherent unreliability associated with assessment in general, and associated with marking in particular”

We are not talking about AI-based marking here; we are talking about human-based marking of work. We are by no means the highly accurate marking and assessing machines we convince ourselves we are. And there are lots of other studies which point to how easily we might be influenced. I remember one study which focussed on decision making by judges where, when the timing of different decisions was analysed, the proximity to a courtroom lunch break was found to have a statistical impact on the judges' decisions. As with marking, we would expect a judge's decision to be consistent and independent of the time at which it is made; however, the evidence suggests this isn't quite the case. Other studies have looked at how the sequence in which papers are marked can have an impact, so that the mark given to a paper following a really good or really poor paper is affected by the paper which preceded it. Again, this points to inconsistency in marking. There is also evidence that if the same paper is presented to the same marker on different occasions over a period of time, different marks result, whereas if we were so accurate in our marking, surely the marks for the same paper should be the same.

It seems clear to me that we are not as accurate in our marking and assessment decisions as we think we are. I suspect calling out AI's inaccuracies is also easier than calling out our own human inaccuracy, as AI doesn't argue back or try to justify its errors, either to us or internally to itself. And this is where a significant part of the challenge lies: we justify and convince ourselves of our accuracy and consistency, where any objective study would show we aren't as good as we think we are. When presented with such quantifiable evidence, we proceed to generate narratives and explanations to justify or explain away any errors or inconsistencies, so our overall perception of our own ability to assess and mark student work remains that we are very good and accurate at it. AI doesn't engage in such self-delusion.

Conclusion

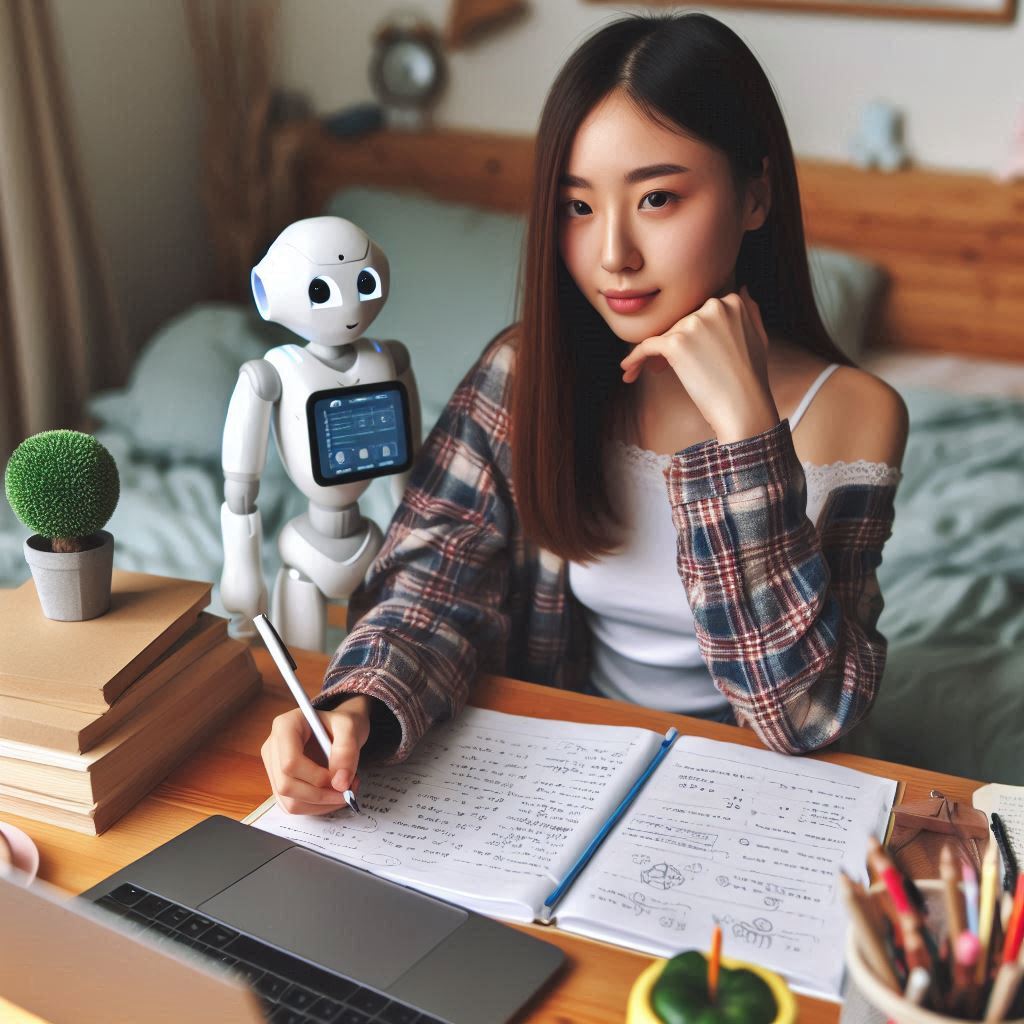

In seeking to address workload, and in considering the use of AI in this process, we need to be cautious about wanting to get things 100% right. Yes, this is our ideal solution, but our current process is far from 100% right, so surely we need only match our current accuracy levels while reducing the workload for teachers. It may be that the AQA research presents the answer, in that “a pragmatic and effective way of improving marking reliability might be to have each script marked by a human marker and by software”. Maybe rather than looking for AI to do the marking for us, it is about working with AI to do the marking, using it as an assistant but ensuring human insight and checking are part of the process.

And I also note that the above applies not just to the marking of student work but also to the use of generative AI in the creation of parental reports, another area of significant workload for teachers. Here too, accepting the frailties of our current approach and then seeking to use AI to achieve at least the same level of consistency while reducing workload seems appropriate.

Maybe we need to stop talking about Artificial Intelligence and talk more about using AI to create Intelligent Assistants (IA)?

References:

Meadows, M. and Billington, L. (2005). A Review of the Literature on Marking Reliability. National Assessment Agency, AQA.

I attended the 2nd Bryanston Education Summit during the week just past, on 6th June. I had gone to the inaugural event last year and I must admit to having found both years interesting and useful. The weather both years has been glorious, which also adds to the event and to the beautiful surroundings of the school. Here's hoping Bryanston keep it up and run another event next year.

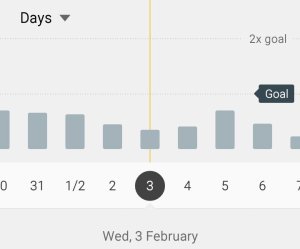

Microsoft's Ian Fordham presented on the various things Microsoft are currently working on. I continue to find the areas Microsoft are looking at, such as using AI to help individuals with accessibility and in addressing SEN, very interesting indeed. I was also very interested by his mention of PowerBI, as I see significant opportunities in using PowerBI within schools to build dashboards of data which are easy to interrogate and explore. This removes the need for complex spreadsheets, allowing teachers and school leaders to do more with the data available but with less effort and time required. I believe this hits two key needs in relation to data use in schools: the need to do more with the vast amounts of data held within schools, and the need to do it more efficiently so that teachers' workload in relation to data can be reduced.

I have written a number of times about my feelings with regard to standardized testing. (You can read some of my previous postings here –
