Earlier this week I once again had the opportunity to speak about AI in education, this time at the Elementary Technology and ANME event in Leeds. On this occasion my presentation focussed very much on the risks and challenges of AI in education rather than the benefits, leaving the benefits and some practical uses of AI to other presenters and to the workshop-style sessions held in the afternoon. This is the first of two posts looking at the risks and challenges I discussed during my session.

Bias

The potential for bias in AI models, and in particular in the current raft of generative AI solutions, was the first of the challenges I discussed. To illustrate the issue I used Midjourney, asking it separately for a picture of a nurse in a hospital setting and then for a picture of a doctor, in both cases not stating the gender and allowing the AI to infer it. Unsurprisingly the AI produced 4 images of a female nurse and 4 images of a male doctor, clearly demonstrating a gender bias. For me the bias here is obvious, and therefore easily identified and corrected through an appropriate prompt asking for a mix of genders, but such biases are not always so identifiable. What about the potential for bias in learning materials presented to a student via an AI-enabled learning platform, or in the choice of text returned to a student by a generative AI solution? And if we can’t identify the bias, how are we to address it? I will note at this point that we also have to consider human bias; it is unfair to expect an AI solution to be without bias when we developed the solution, provided the training data, etc, and we are not without bias ourselves.

Data Privacy

Lots of individuals, including myself, are already providing data to AI solutions, but do we truly know how this data will be used, who it might be shared with, or what additional data might be inferred from it? We need to know not only how things stand now, but also the future intentions of those we provide data to. The DfE makes clear that school personal data shouldn’t be provided to generative AI solutions, but what if attempts are made to pseudonymise the data? What level of pseudonymisation is appropriate? And then there is the issue of inferred data. I recently heard the suggestion that, if we fed all of our AI prompts back into an AI solution and asked it to provide a profile of the user, it would do a reasonable job of the task, possibly identifying age, work sector and more. AI and generative AI offer massive gains in convenience, efficiency and speed, but the trade-off is giving more data away. Is this a fair trade-off, and one which we are consciously accepting?
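To make the pseudonymisation question a little more concrete, below is a minimal Python sketch of one common approach: replacing known names with a keyed hash before any text leaves the school. This is purely illustrative and is an assumption on my part rather than anything the DfE guidance prescribes; the function names, the "Student-" label and the example key are all hypothetical.

```python
import hashlib
import hmac
import re


def pseudonym(name: str, secret_key: bytes) -> str:
    """Return a stable stand-in label for a name (hypothetical scheme)."""
    # A keyed hash (HMAC) means the label cannot be recreated from the
    # name alone; whoever holds the key could still re-link the data.
    normalised = name.strip().lower().encode("utf-8")
    digest = hmac.new(secret_key, normalised, hashlib.sha256).hexdigest()
    return f"Student-{digest[:8]}"


def pseudonymise_text(text: str, names: list[str], secret_key: bytes) -> str:
    """Replace each known name in the text with its stand-in label."""
    # Handle longer names first so "Alice Smith" is replaced before "Alice".
    for name in sorted(names, key=len, reverse=True):
        label = pseudonym(name, secret_key)
        pattern = re.compile(rf"\b{re.escape(name)}\b", flags=re.IGNORECASE)
        text = pattern.sub(lambda _match, label=label: label, text)
    return text


if __name__ == "__main__":
    key = b"example-key-kept-inside-the-school"  # illustrative only
    comment = "Alice Smith works well with others; Alice needs help with fractions."
    print(pseudonymise_text(comment, ["Alice Smith", "Alice"], key))
```

Even in this small example the limits are clear. Only the names supplied in the list are replaced, and details such as a year group, a medical note or a distinctive turn of phrase would pass straight through, which is exactly why the question of what level of pseudonymisation is appropriate remains an open one.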

Hallucinations

The issue of AI presenting made-up information was another one which I found easy to recreate. I note this is often referred to as a “hallucination”, however I am not keen on the term as it anthropomorphises the current generative AI solutions, when I believe the solutions we currently have are still narrow in terms of their focus and therefore more akin to Machine Learning, a subset of the broader AI technologies. To demonstrate this issue I used a solution we have been working on which helps teachers generate parental reports, turning a list of teacher-provided strengths and areas for improvement into readable sentences which teachers can then review and update. For the demonstration we simply did not provide the AI with any strengths or areas for improvement. The AI still went on to produce a report; in the absence of any teacher-provided strengths or areas for improvement, it simply made them up. For me this highlights the fact that AI solutions cannot be considered a replacement for humans, but are instead a tool or assistant to humans.

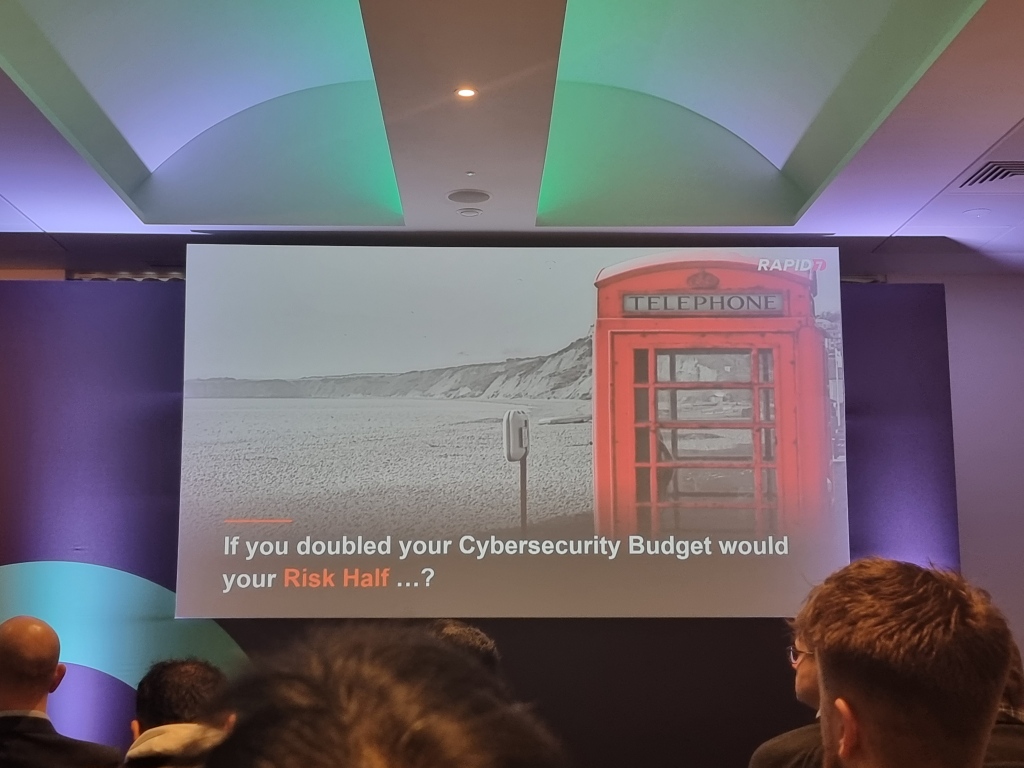
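One simple mitigation for this particular failure is to check the inputs before anything is sent to the AI at all. The Python sketch below is not the code behind the solution described above; it is a hypothetical illustration, and the function names and prompt wording are my own assumptions. It refuses to build a prompt when the teacher has supplied no comments, and otherwise tells the model to use only the points provided.

```python
def bullet_list(items: list[str]) -> list[str]:
    """Format items as bullet lines, flagging an empty list explicitly."""
    return [f"- {item}" for item in items] or ["- (none provided)"]


def build_report_prompt(
    pupil_label: str, strengths: list[str], improvements: list[str]
) -> str:
    """Build a report prompt, refusing to proceed with no teacher input."""
    # Drop blank entries so a list of empty strings counts as empty.
    strengths = [s.strip() for s in strengths if s.strip()]
    improvements = [i.strip() for i in improvements if i.strip()]

    if not strengths and not improvements:
        # Stop here rather than asking the model to write from nothing.
        raise ValueError("No teacher comments supplied; report not generated.")

    lines = [
        f"Write a short parental report for {pupil_label}.",
        "Use ONLY the points listed below and do not add any others.",
        "Strengths:",
        *bullet_list(strengths),
        "Areas for improvement:",
        *bullet_list(improvements),
    ]
    return "\n".join(lines)


if __name__ == "__main__":
    print(build_report_prompt("Pupil A", ["Works well in groups"], []))
    try:
        build_report_prompt("Pupil A", [], ["   "])
    except ValueError as error:
        print(f"Blocked: {error}")
```

A check like this only catches the empty case. An instruction in a prompt is no guarantee that the model will follow it, so the teacher reviewing and updating the output remains the real safeguard.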

Cyber

The issue of cyber security, or information security, and AI is quite a significant one from a variety of different perspectives. First there is the potential use of AI in attacks against organisations, including schools. The existence of criminally focussed generative AI tools has already been reported, in the form of WormGPT and FraudGPT. Generative AI makes it easy to quickly create believable emails or usable code, regardless of whether the purpose is benign or whether it is for a phishing email or malware. Additionally there is the issue of AI as a new attack surface which cyber criminals might seek to leverage. This might be through the use of prompt injection to manipulate the outputs from AI solutions, possibly providing fake links or validating organisations or posts which are malicious or fictitious. Attacks could also involve poisoning of the AI model itself, such that the model’s behaviour and responses are modified to suit the malicious ends of an attacker. And these are only a couple of the implications in relation to AI and cyber security.

Conclusion

I think it is important to acknowledge that my outlook on AI, both in general and in education, is a largely positive one. However, I also think it is important that we are realistic and accept the existence of a balance between the benefits on one side and the risks and challenges on the other; to make use of the benefits we need, at the very least, to be aware of and consider the drawbacks and risks which balance them. This post is therefore about making sure we are aware of the risks, with my next post digging into a few further risks and challenges.

As Darren White put it in his presentation at the Embracing AI event, “Be bold but be responsible”.