Earlier this month I had my second opportunity, since returning to the UK from the UAE, to contribute to an international conference, this time the FutureShots event in Italy, not far outside Venice. I have already posted about my gondola experience during this particular trip; however, I would now like to share some thoughts from the conference proper, and in particular from the first day, which focussed on AI in education.

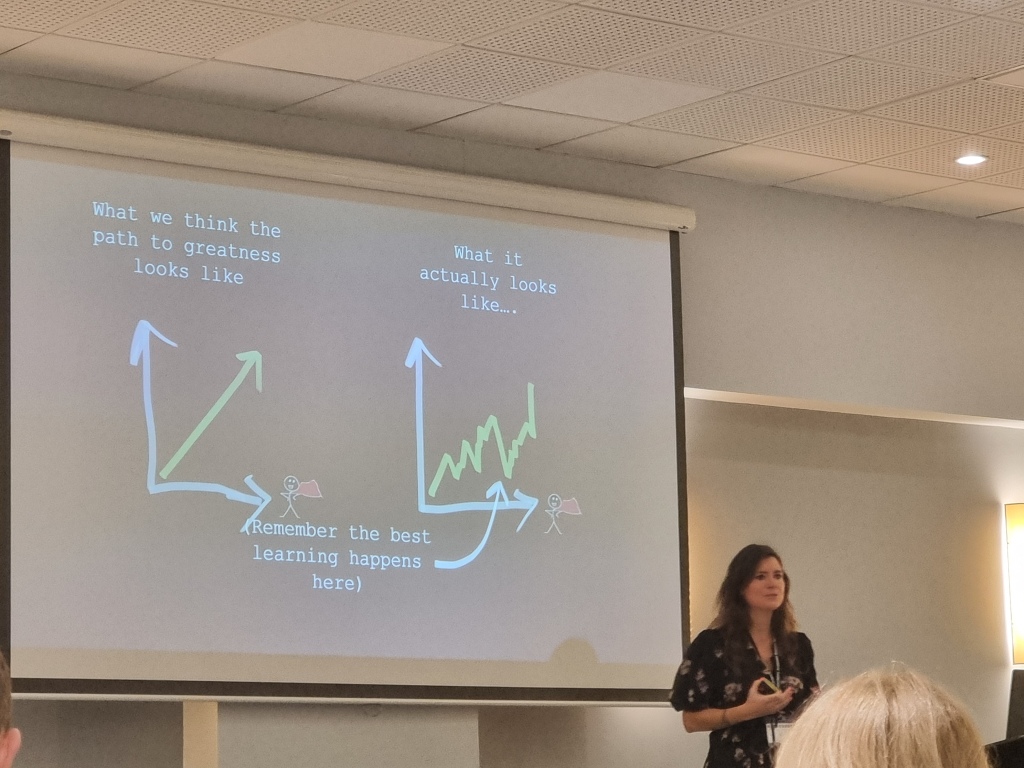

The keynote session was delivered by my friend and colleague from the ISC Digital Advisory Group, Laura Knight, who gave her usual flawless presentation with so many takeaways. Let me try to summarise the ones which particularly resonated with me.

Binaries: I have long been concerned by the binary arguments which seem to dominate so many education discussions. In the case of AI things are no different, with people either predicting doom and gloom, that AI will end the world, or being evangelical about its ability to transform the world and education for the better. The reality, as I have often stated, lies somewhere in between, with positives balanced against negatives, challenges or drawbacks. AI isn't positive OR negative, but both positive and negative, and very dependent on the people using it, how they are using it and the task to which they are putting it, be it for good or for evil.

Trough of Disillusionment: Laura suggested that we may be passing the hype phase of AI and moving into the “trough of disillusionment”. There has certainly been a lot of singing and dancing about AI in education, and maybe this is wearing thin as the impact has generally been less than advertised. I also note, however, that the tech is improving and advancing quickly. Only in the last few weeks we have seen GPT-4o and similar advancements coming out of Google, so could it be that, just as we approach the trough of disillusionment with one iteration of generative AI, a new iteration with new functionality appears, throwing us back into awe and wonderment?

Now, Laura made many more points which I took away from her session. These included ownership of ideas, agency in the use of the tools, the importance of trust, integrity and truth, and much more. I will, however, save some of these for future blogs.

The final, and possibly biggest, point I took away from the session related to the term “resilience”, which is often stated as a characteristic we wish to foster in students. Laura raised concerns that although resilience is important, it is not a state we can live in for any length of time. This loosely aligns with my concerns regarding the “do more”, “be more efficient” narrative which we encounter all too often, both in education and beyond. This drive to “do more” with the same resources pushes us increasingly into survival mode and “resilience”, and this is unsustainable over time. Laura suggested an alternative in “equanimity”: being comfortable, and calmly coping with and managing change. Now, I am not 100% sure about this term yet, but I definitely agree with the sentiment that maybe we need to be a little more careful about overselling resilience as the solution to our challenges.

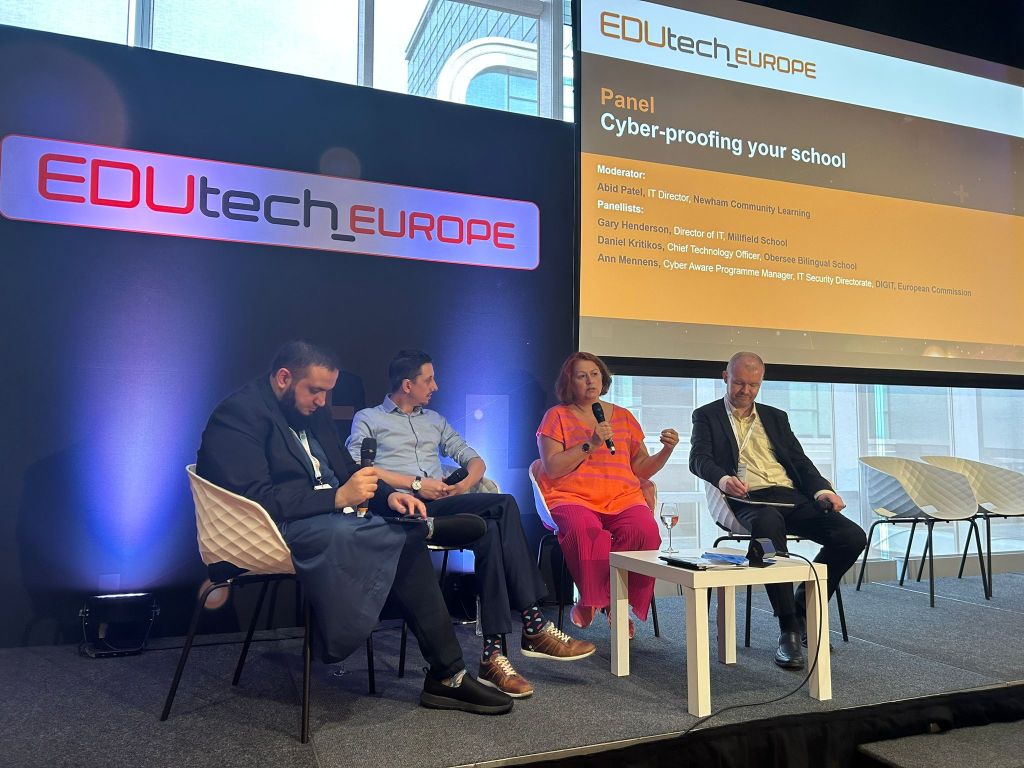

Next up was the panel session which I was involved in, chaired by Alessandro Bilotta, Content Director for EDUtech di Terrapinn, along with Carlos Garriga Gamarra, CIO, IE University, Donatella Solda, Presidentessa, EdTech Italia, and Diego Pizzocaro, Head, H-FARM My School. I must admit I didn't take any notes during this one, having been a bit too busy being involved in it, but the session did pose some interesting questions. What does it mean to be human in a world of AI and generative AI? If the key thing for us humans is to do the things AI can't do, what are those things? I think the key thing is the social side of life: human-to-human interaction, including non-verbal cues, so not a Teams or Zoom call. I used the term “human flourishing” as I think that sounds about right in principle, although I will admit I haven't quite bottomed out what human flourishing actually looks like; I suspect that's a work in progress. Another question related to GDPR and AI, and whether GDPR was a roadblock. For me it isn't; we've been using satnav, Google and social media for years without too many GDPR-related questions. Data protection is important, but good data protection practice is independent of whether you are looking at an AI-based solution or a non-AI-based solution; it's simply good data protection practice.

EdTech startups were the focus of the next session, with a number of startups each providing a short pitch of their product. I must admit to being impressed with some of the pitches, not just due to the ideas, but due to the presenters delivering in English when their native language was generally Italian. Doing a short, time-bound pitch is hard enough without having to give it in a second language. The fact that H-FARM has these startups as part of its campus is such a great idea, as it encourages the co-creation of solutions rather than tech vendors creating what they think education wants and then spending lots of money convincing educationalists that their product is the one and best solution.

We were not even through the morning at this point and I already had quite a few thoughts and ideas to take away and consider. My Surface battery was depleting fast, an issue which was to impact me later in the day, but the day was going well. I have plenty more to share from the event; however, I am going to split things here for now and continue in a subsequent blog. If I were to take away one key thing from the morning, it would be the need to put humans at the centre of AI use. It is about assisting humans, and therefore allowing humans to focus on the things which humans do well and which support “human flourishing”.